Most eCommerce founders think experimentation means running an A/B test on a button color and calling it a day. That mindset leaves serious revenue on the table. True growth experimentation is a disciplined, repeatable system that touches every layer of your funnel, from the first ad impression to post-purchase retention. It’s the engine behind the most impressive DTC scaling stories you’ve read about, and it’s far more accessible than most brands realize. This article breaks down what growth experimentation actually is, how to build a framework around it, and how to start running tests that compound into real, measurable results.

| Point | Details |
|---|---|
| Experimentation powers growth | Consistent, cross-funnel experiments fuel business breakthroughs in eCommerce. |
| Learning trumps winning | Every test, win or lose, reveals insights that improve future campaigns. |
| Frameworks matter | A disciplined, step-by-step process ensures reliable, repeatable results. |
| Velocity drives discovery | Testing often and rapidly yields faster growth than slow, perfectionist approaches. |
| Culture makes it stick | Team buy-in and openness to learn from failures turn growth experimentation into habit. |

Growth experimentation is not a synonym for A/B testing. It’s a broader operating system for finding what drives business growth, and then doing more of it, faster. Where a standard A/B test asks “does version B convert better than version A,” growth experimentation asks “what levers, across our entire funnel, can we pull to grow revenue, retention, and acquisition simultaneously?”

The distinction matters because most eCommerce brands run isolated tests. They tweak a landing page headline, measure clicks for a week, and move on. Growth experimentation treats the entire customer journey as a living lab. Every touchpoint (ad creative, email sequence, checkout flow, upsell offer) is a potential experiment.

As growth hacking research from Stripe explains, growth experimentation is the core of growth hacking, encompassing hypothesis formation, rapid testing, and learning across all funnel stages, not just single product features. That scope is what separates brands that grow predictably from those that grow by accident.

Here’s what growth experimentation covers that standard A/B testing typically misses:

“The goal of growth experimentation isn’t to win every test. It’s to learn faster than your competition and build a compounding knowledge base that makes every future decision smarter.”

Dropbox famously used referral experimentation to grow from 100,000 to 4 million users in 15 months. Netflix runs hundreds of simultaneous experiments on everything from thumbnail images to recommendation algorithms. These aren’t flukes. They’re the result of treating experimentation as a core business function, not a marketing side project. If you want AI-driven CRO insights to layer on top of this, the foundation still has to be a disciplined experimentation culture.

Now that we’ve defined growth experimentation, let’s break down its guiding rules and the process you’ll follow.

Growth experimentation works because it follows a repeatable structure. Without that structure, you’re just guessing with extra steps. Here are the five core tenets that make it effective:

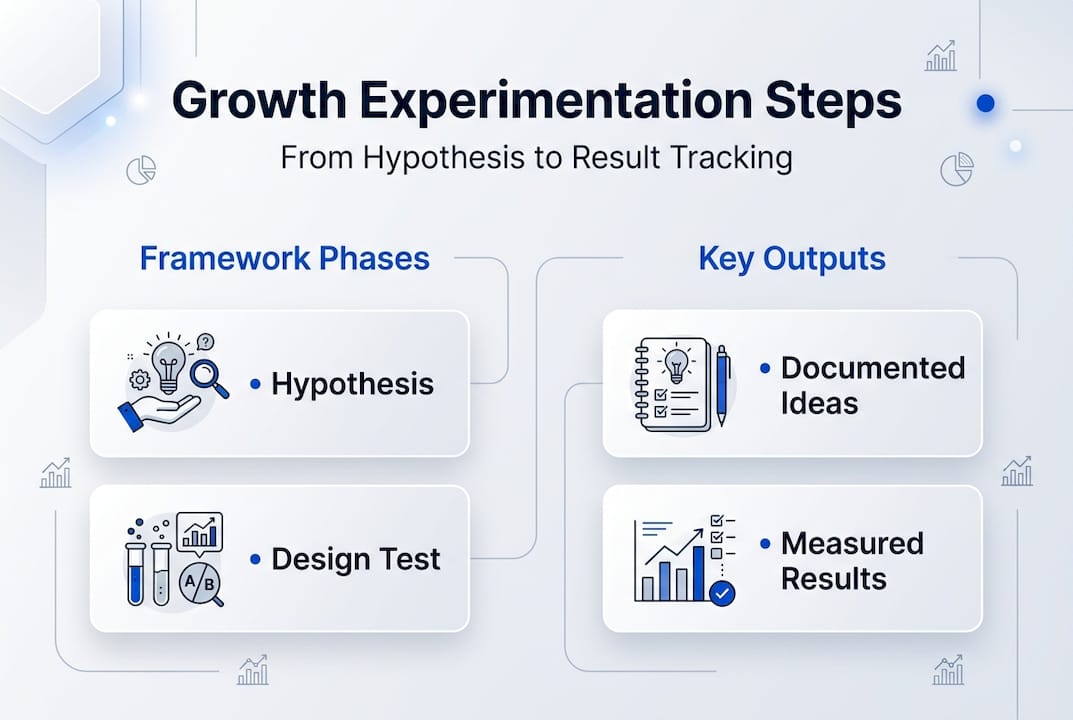

The practical framework looks like this:

| Phase | Action | Output |
|---|---|---|
| Hypothesis | Write a specific, testable prediction | Documented hypothesis |
| Setup | Run an A/A test to confirm baseline accuracy | Validated tracking |
| Execution | Launch experiment with defined runtime | Live test |
| Analysis | Review results against pre-set thresholds | Decision data |
| Decision | Scale, iterate, or discard | Next experiment |
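The Analysis and Decision phases in the table above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the `ExperimentPlan` fields, the `decide` function, and the rule that maps a result to scale, iterate, or discard are all assumptions made for the example. It also looks only at observed lift and leaves out statistical significance, which a real analysis would check as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentPlan:
    """Hypothetical pre-registered plan, written down before launch."""
    hypothesis: str
    primary_metric: str
    min_relative_lift: float  # e.g. 0.05 means "scale only at +5% or better"
    runtime_days: int


def decide(plan: ExperimentPlan, control_rate: float, variant_rate: float) -> str:
    """Map an observed result to a decision using the pre-set threshold.

    Assumed rule for illustration:
      - lift at or above the threshold -> "scale"
      - positive lift below threshold  -> "iterate"
      - zero or negative lift          -> "discard"
    """
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    lift = (variant_rate - control_rate) / control_rate
    if lift >= plan.min_relative_lift:
        return "scale"
    if lift > 0:
        return "iterate"
    return "discard"


plan = ExperimentPlan(
    hypothesis="A benefit-led headline raises landing page conversion",
    primary_metric="landing_page_cvr",
    min_relative_lift=0.05,
    runtime_days=14,
)
print(decide(plan, control_rate=0.040, variant_rate=0.044))
```

Fixing the threshold and runtime in the plan before launch is the point of the exercise: the decision step then reads the plan rather than reinterpreting the result after the fact.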

Pro Tip: Before running any experiment, do an A/A test first. This means splitting traffic between two identical versions to confirm your measurement setup isn’t producing false positives. It’s a step most brands skip and then wonder why their results feel inconsistent.
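One way to read an A/A result is with a two-proportion z-test, sketched below using only the Python standard library. The function names, the sample counts, and the 0.05 cutoff are assumptions for illustration. Your testing tool may use a different statistical method.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (p_a - p_b) / se
    # For a standard normal, the two-sided tail probability is erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


def aa_test_looks_healthy(conv_a: int, n_a: int, conv_b: int, n_b: int,
                          alpha: float = 0.05) -> bool:
    """True when two identical variants show no significant difference."""
    return two_proportion_p_value(conv_a, n_a, conv_b, n_b) >= alpha


# Hypothetical counts: 5,000 visitors per arm, both arms seeing the same page.
print(aa_test_looks_healthy(200, 5000, 205, 5000))  # small gap between arms
print(aa_test_looks_healthy(200, 5000, 300, 5000))  # large gap between arms
```

Even a correct setup will flag a "significant" difference in roughly 5% of A/A runs at this cutoff, so one failure is a reason to rerun and investigate, not proof that tracking is broken.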

For expert experimentation guidance on pre-registration and guardrails, GitLab’s engineering handbook is one of the most practical public resources available. Pair that with solid landing page test frameworks and you have a foundation most agencies never give their clients. Your ads CRO setup also needs to be dialed in before experiments can produce clean data.

It’s one thing to talk frameworks. Let’s see how brands actually experiment for growth.

The best way to understand growth experimentation is to see what it produces. Here’s a breakdown of real-world tests and what they revealed:

| Brand | Hypothesis | Metric Tested | Result |
|---|---|---|---|
| Dropbox | Referral incentive will accelerate signups | User acquisition rate | 4M users in 15 months |
| Netflix | Thumbnail variation affects content clicks | Click-through rate | Significant lift across catalog |
| Children’s supplement brand | New ad hook increases qualified traffic | Landing page CVR | Measurable conversion improvement |

Dropbox and Netflix didn’t stumble into growth. They built experimentation into their operating model, running tests at every funnel stage simultaneously. The children’s brand case study we worked on at Blue Bagels followed the same logic: form a hypothesis, isolate the variable, measure cleanly, and act on the data.

One of the most counterintuitive lessons from real-world experimentation is that failed tests are often more valuable than wins. A failed test tells you what your audience doesn’t respond to, which narrows your future hypothesis space and saves you from scaling something that would have plateaued anyway.

Pro Tip: Track your “loss library.” Every failed experiment should be documented with the hypothesis, the result, and the likely reason it didn’t work. Over time, this becomes one of your most valuable strategic assets.

For context on how compounding small wins works in practice, the supplement brand results we’ve documented show how a series of modest individual test lifts, say 8% here and 12% there, stack into 40% or more total funnel improvement over a quarter. That’s the compounding effect in action. It’s also why brands that struggle to scale are often the ones chasing one big win instead of building a system of small, consistent ones.
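The compounding arithmetic is easy to check. The sketch below assumes each lift multiplies the one before it, for example because the tests improve successive funnel stages. Real lifts rarely combine this cleanly. The `compounded_lift` helper and the four-test series are hypothetical, not figures from the case study.

```python
from math import prod


def compounded_lift(lifts: list[float]) -> float:
    """Total relative lift when each individual lift multiplies the last."""
    return prod(1 + lift for lift in lifts) - 1


# Two wins alone: 1.08 * 1.12 = 1.2096, i.e. about a 21% total lift.
print(f"{compounded_lift([0.08, 0.12]):.1%}")

# A hypothetical quarter with four modest wins clears the 40% mark.
print(f"{compounded_lift([0.08, 0.12, 0.08, 0.08]):.1%}")
```

An 8% and a 12% win together come to about 21%, so reaching 40% takes a steady series of such wins. That is the case for velocity over a single big test.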

Armed with real-world examples, here’s how you can run experiments tailored to your own brand.

Setting up your first growth experiment doesn’t require a data science team. It requires discipline and a clear process. Here’s how to do it:

Common mistakes to avoid:

Your ads CRO guidelines and landing page best practices should inform which variables you prioritize first. High-traffic pages with clear conversion goals are the best starting points because they generate results faster.

Here’s the uncomfortable truth: most brands that “do experimentation” are really just running occasional tests and hoping one of them is a silver bullet. That’s not experimentation. That’s optimism with a spreadsheet.

The brands that actually build compounding growth through experimentation share one trait: they value the learning from a failed test as much as the revenue from a winning one. They’ve internalized that scale struggles almost always trace back to organizational habits, not creative quality or budget size.

The real killers of experimentation programs are internal. Siloed teams where paid media doesn’t talk to CRO. Short-term thinking that demands every test produce a winner within two weeks. Risk aversion that kills bold hypotheses before they’re ever tested. These aren’t technical problems. They’re cultural ones.

The fix isn’t a new tool or a bigger testing budget. It’s building a shared language around experimentation across your team, celebrating what you learn from losses, and committing to velocity over perfection. The brands winning in 2026 aren’t running better individual tests. They’re running more of them, more consistently, and learning faster than everyone else.

Growth experimentation works when it’s built on the right creative foundation. At Blue Bagels, we’ve spent four years building and testing the assets that feed your experiments: direct response ad creative, high-converting landing pages, presell pages, and UGC scripts, all grounded in real conversion data. Our ads CRO services are built around the same principles this article covers: hypothesize, test, learn, and scale. If you want to see what that looks like in practice, our real case studies show the actual results. And if your landing pages are the bottleneck, our landing page strategies give you the framework to fix them fast.

Growth experimentation involves continual, cross-funnel testing with a focus on learning, speed, and discovery across all funnel stages, not just validating isolated changes like a standard A/B test does.

Start with one high-impact element like an ad headline or landing page CTA, set clear metrics upfront, and follow the pre-registration process to keep your results clean and actionable.

Predefine your metrics and thresholds before launching. Success means hitting those benchmarks, but pre-registered criteria ensure that failures are just as informative as wins.

Testing velocity matters more than win rate, so run enough tests to learn quickly, but keep each experiment isolated enough that results stay clear and actionable.