TL;DR:

- Ad testing is a disciplined way to identify effective ad variations, improving ROI over simple scaling.

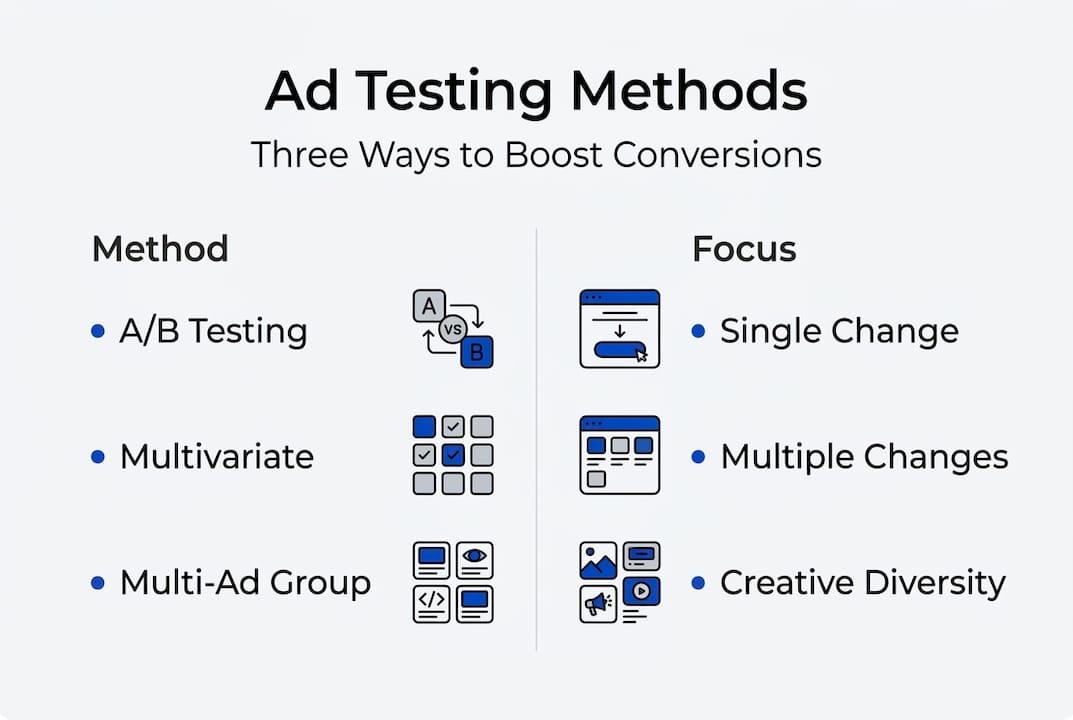

- Methodologies include A/B, multivariate, and multi-ad group testing, chosen based on traffic and goals.

- Testing concepts and hooks first yields bigger gains than minor creative tweaks, emphasizing strategic experimentation.

Most e-commerce marketers hit a growth wall and immediately reach for the budget dial. Spend more, reach more people, win more sales. It sounds logical, but it misses the real lever. Ad testing, the disciplined practice of running controlled experiments on your creative, is what separates brands that scale profitably from those that just burn cash faster. Done right, it tells you exactly what your audience responds to, so every dollar you spend works harder. This guide breaks down what ad testing actually is, which methods work for different traffic levels, and the expert strategies that drive real conversion gains in direct response campaigns.

| Point | Details |
|---|---|
| Ad testing beats scaling | Smart ad testing, not just increased spend, is the key driver for conversion rate gains. |
| Pick the right methodology | Choose A/B, multivariate, or multi-ad group testing based on traffic and campaign goals. |
| Test concepts first | Testing ad concepts and hooks before design tweaks delivers bigger performance improvements. |
| Use AI for speed | AI can dramatically increase your testing velocity, generating dozens of variants quickly. |
| Avoid common pitfalls | Control variables carefully and use proper sample sizes to ensure meaningful results. |

Ad testing is the process of running controlled experiments on your paid ads to find out which versions drive better results. Better results might mean higher click-through rates, lower cost per acquisition, or more purchases. The point is always the same: replace guessing with evidence.
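Each of those results is a simple ratio of raw campaign totals, so it helps to compute them the same way for every variant. A minimal sketch, assuming you can export impressions, clicks, purchases, spend, and attributed revenue per variant (the `AdResult` and `summarize` names and the numbers are hypothetical, not any ad platform's API):

```python
from dataclasses import dataclass


@dataclass
class AdResult:
    """Raw totals for one ad variant over the test window (field names are illustrative)."""
    impressions: int
    clicks: int
    purchases: int
    spend: float    # ad spend in account currency
    revenue: float  # revenue attributed to this ad


def ratio(numerator: float, denominator: float) -> float:
    """Safe division: returns NaN instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def summarize(r: AdResult) -> dict[str, float]:
    """Compute the usual direct response metrics from raw totals."""
    return {
        "ctr": ratio(r.clicks, r.impressions),            # click-through rate
        "conversion_rate": ratio(r.purchases, r.clicks),  # purchases per click
        "cpa": ratio(r.spend, r.purchases),               # cost per acquisition
        "roas": ratio(r.revenue, r.spend),                # return on ad spend
    }


# Hypothetical totals for a single variant.
variant_a = AdResult(impressions=50_000, clicks=1_000, purchases=40, spend=2_000.0, revenue=6_000.0)
print(summarize(variant_a))
# {'ctr': 0.02, 'conversion_rate': 0.04, 'cpa': 50.0, 'roas': 3.0}
```

Decide which of these is your primary success metric before the test starts; comparing variants on whichever metric happens to look best afterward defeats the purpose of a controlled experiment.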

For e-commerce brands running direct response campaigns, this matters enormously. You are not building brand awareness over years. You need people to click, land, and buy now. Every ad element, from the hook in the first three seconds of a video to the color of a call-to-action button, affects whether that happens.

Ad testing is fundamentally different from scaling. Scaling takes what you have and spends more on it. Testing finds out what actually works before you commit more budget. Brands that test first and scale second consistently outperform those that do the reverse. If you want a broader view of how testing fits into growth strategy, our growth marketing guide is a solid starting point.

In practice, ad testing relies on three core methodologies:

- A/B testing, which changes one variable at a time for clean measurement.
- Multivariate testing, which changes multiple variables simultaneously and requires high traffic volumes.
- Multi-ad group testing, which tests broader creative cross-sections across campaign structures.

“Ad testing is not a one-time project. It is an ongoing engine that compounds over time. Each test you run adds to a body of knowledge about your audience that no competitor can easily copy.”

When done consistently, ad testing improves your return on ad spend (ROAS) by eliminating underperforming creative faster and doubling down on what converts. It also informs your broader creative strategy, revealing patterns in what your audience responds to across channels.

With the definition clear, let’s break down the three major methodologies and compare them so you can pick the right one for your situation.

A/B testing is the most common and most accessible method. You create two versions of an ad, change exactly one variable, show both to comparable audiences (ideally a random split of the same audience), and measure which performs better. Because only one thing changes, you know precisely what caused the difference. This is the right starting point for most e-commerce brands.

Multivariate testing runs multiple variable changes at the same time. It can reveal interaction effects, meaning you learn not just which headline works but which headline works best with a specific image. The catch is that it requires significantly more traffic to reach statistical significance. Running multivariate tests on low-volume campaigns produces noise, not insight.
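To see why multivariate tests are so traffic-hungry, count the cells. In a full-factorial design (an assumption; some tools use fractional designs that test fewer combinations), every combination of one variant per element is its own cell and needs its own share of traffic. The element names and the per-cell sample below are placeholders:

```python
from itertools import product
from math import prod

# Hypothetical test plan: each key is an ad element, each list holds its variants.
elements = {
    "headline": ["pain", "transformation", "social proof"],
    "image": ["lifestyle", "product shot", "UGC"],
    "cta": ["Shop now", "Get yours"],
}

# Every combination of one variant per element is a separate cell.
cells = list(product(*elements.values()))
n_cells = prod(len(variants) for variants in elements.values())  # 3 * 3 * 2

# Placeholder: derive this from a sample size calculation for your own baseline.
per_cell_visitors = 5_000
print(f"{n_cells} cells -> about {n_cells * per_cell_visitors:,} visitors in total")
# 18 cells -> about 90,000 visitors in total
```

With the same per-cell requirement, an A/B test needs only two cells, so the 18-cell test above needs nine times as much traffic to reach the same confidence.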

Multi-ad group testing is less about controlled experiments and more about directional learning. You run different creative concepts across separate ad groups and let performance data tell you which direction to pursue. It is faster and messier than A/B testing, but it is useful for early-stage creative exploration.

| Method | Variables changed | Traffic needed | Best for |
|---|---|---|---|
| A/B testing | 1 | Low to medium | Precise variable measurement |
| Multivariate | Multiple | High | Interaction effects, scaling brands |
| Multi-ad group | Concepts | Any | Early-stage creative direction |

Choosing the right method depends on two things: your traffic volume and your campaign goal. If you are trying to understand why something works, use A/B. If you are trying to find the best combination at scale, use multivariate. If you are still figuring out which creative concept resonates, use multi-ad group testing.

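Setting up an A/B test follows a simple process: write down your hypothesis, change one variable, define your success metric, set a minimum sample size before launch, and let the test run until it reaches statistical significance.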
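The minimum sample size is the step most often skipped, and it can be estimated up front with the standard normal-approximation formula for comparing two conversion rates. A minimal sketch, assuming a two-sided test at a 5% significance level with 80% power (common defaults, not requirements); the function name and the 2% baseline are hypothetical:

```python
from math import ceil, sqrt
from statistics import NormalDist


def min_sample_per_variant(baseline_rate: float, min_detectable_lift: float,
                           alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH variant of a two-sided, two-proportion A/B test.

    baseline_rate: current conversion rate of the control (e.g. 0.02 for 2%).
    min_detectable_lift: smallest relative lift worth detecting (e.g. 0.20 for +20%).
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + min_detectable_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)            # about 0.84 for 80% power
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# 2% baseline conversion rate, aiming to detect a 20% relative lift (2.0% -> 2.4%).
n = min_sample_per_variant(0.02, 0.20)
print(f"Run each variant until it has at least {n:,} visitors")
```

The required sample grows with the inverse square of the difference you want to detect, so halving the target lift roughly quadruples the traffic you need.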

Pro Tip: Do not call a test early because one version looks like it is winning after two days. Premature conclusions are one of the most expensive mistakes in ad testing. Wait for statistical significance before making decisions.
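Checking for significance once the planned sample is reached can be sketched as a pooled two-proportion z-test. This is one common choice among several (ad platforms may use their own methods, including Bayesian ones); the function name and the example counts are hypothetical:

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates of A and B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Two days in: B converts at 2.8% vs A at 2.0%, which looks like a 40% lift.
z, p = two_proportion_z_test(conv_a=30, n_a=1_500, conv_b=42, n_b=1_500)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Here the p-value comes out at roughly 0.15, well above the usual 0.05 threshold, so the apparent winner could easily be noise. Run the check once at the planned sample size: re-checking daily and stopping at the first p below 0.05 inflates the false-positive rate.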

Our guides to building high-converting ad techniques into your creative process and to funnel optimization strategies that complement a testing program are both worth your time.

Now that you know what and how to test, these expert strategies will show you where to focus for the highest impact.

Most brands test the wrong things first. They swap out button colors, try a new font, or change the background image. These tweaks occasionally move the needle, but they rarely produce the kind of lift that changes a campaign’s trajectory. The real breakthroughs come from testing at the concept level.

A concept test asks a fundamentally different question. Instead of “does this headline work better than that one,” it asks “does this entire angle on our product resonate more than a completely different angle?” You might test a pain-focused ad against a transformation-focused ad. Or a social proof angle against a curiosity-driven hook. The winner tells you something meaningful about how your audience thinks about their problem.

Hooks deserve special attention. The hook is the first two to three seconds of a video or the first line of copy. It determines whether someone stops scrolling or keeps moving. Testing hooks is one of the highest-leverage activities in direct response advertising because a better hook improves everything downstream.

Here is where AI becomes genuinely useful. Testing velocity matters: AI can help you generate 50 or more variants per month, which means faster learning cycles and more data to act on. Brands that test more variants find winners faster. That is a compounding advantage.

However, there is an important caveat: AI-generated images with an obvious AI look consistently underperform on click-through rates. Audiences recognize them and trust them less. Use AI for speed and volume in copy and concept generation. For visuals, prioritize authentic photography, real UGC, or well-produced video.


Pro Tip: Keep a “swipe file” of your winning hooks organized by angle type. Over time, you will start to see patterns that tell you exactly what your audience responds to at an emotional level.

For brands that want expert support building this kind of systematic approach, our ad CRO services are built around exactly this methodology. You can also learn more about direct response ad creative principles and how lead conversion CRO steps connect your ad testing to full-funnel performance.

With those strategies in mind, here is how to put ad testing into practice and avoid costly mistakes.

Setting up a test correctly from the start saves you from drawing wrong conclusions later. The most common mistake is changing more than one variable per test. If you change the headline and the image at the same time, you cannot know which change drove the result. Discipline here is non-negotiable.
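The one-variable rule can be enforced mechanically if you describe each variant as a set of named elements before launch. A minimal sketch, assuming a hypothetical dict-based description of each variant (the element names and values are placeholders):

```python
# Hypothetical representation: each ad variant is a dict of its creative elements.
control = {"hook": "pain", "headline": "Headline A", "image": "lifestyle", "cta": "Shop now"}
challenger = {"hook": "pain", "headline": "Headline B", "image": "lifestyle", "cta": "Shop now"}


def changed_elements(a: dict, b: dict) -> list[str]:
    """List the element names whose values differ between two variants."""
    keys = a.keys() | b.keys()
    return sorted(k for k in keys if a.get(k) != b.get(k))


def validate_ab_pair(a: dict, b: dict) -> str:
    """Return the single changed element, or raise if the pair is not a clean A/B test."""
    diff = changed_elements(a, b)
    if len(diff) != 1:
        raise ValueError(f"Expected exactly one changed element, found {diff}")
    return diff[0]


print(validate_ab_pair(control, challenger))  # headline
```

Running a check like this as part of your launch checklist catches the headline-plus-image mistake before it costs you a test cycle.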

Beyond that, watch for these common pitfalls in e-commerce ad tests:

| Common pitfall | What goes wrong | How to fix it |
|---|---|---|
| Testing too many variables | No clear winner signal | One variable per test |
| Small sample sizes | False positives | Set minimums before launch |
| Ending tests early | Misleading data | Wait for significance |
| No hypothesis | Random testing | Write the hypothesis first |
| Ignoring losers | Lost learning | Document all results |

“The brands that win at paid media are not the ones with the biggest budgets. They are the ones with the best learning systems. Every test, win or lose, is an asset.”

Integrating ad testing into your ongoing campaign management means treating it as a standing agenda item, not a one-off project. Allocate a portion of your budget specifically for testing, separate from your scaling budget. This protects your learning program from getting cut when performance pressure rises.

For inspiration on what well-structured creative looks like before you test it, browsing static ad examples can help you understand the baseline quality level your tests should start from. The testing methodologies covered above are your structural foundation, but consistent execution is what turns methodology into results.

Here is what most ad testing articles will not tell you: the majority of e-commerce brands are not actually testing. They are tweaking. There is a real difference.

Tweaking is changing a button color or swapping a product image and calling it a test. Real testing means putting a genuinely different concept, angle, or hook into the market and being willing to be wrong about what you thought would win. That takes courage, because bold tests can fail visibly.

The brands we see achieving serious conversion uplift are the ones running five to ten meaningful concept tests per month, not five variations of the same safe ad. They build testing into their culture, not just their process. They celebrate learning from a losing test as much as a winning one.

Even sophisticated growth teams underestimate creative testing velocity as a growth lever. They invest in audience research, funnel audits, and landing page optimization, all of which matter, but they leave the creative side on autopilot. That is where the biggest untapped gains usually sit. If you want to understand why brands struggle to scale, creative stagnation is almost always part of the answer.

At Blue Bagels, we have spent four years building and testing direct response creative for e-commerce and DTC brands across Meta, TikTok, Google, and YouTube. Our ad performance services are built around the same concept-first, hook-first testing methodology covered in this guide. We do not guess. We test, measure, and scale what works. If you want to see what that looks like in practice, our display case studies show real campaign results from real brands. And if your landing pages are not converting the traffic your ads send, our landing page optimization work closes that gap. Reach out if you want a performance audit or a tailored testing strategy built around your specific goals.

AI can speed up variant generation to 50 or more variants per month, enabling faster learning cycles and more opportunities to find winning creative.

A/B testing changes one variable at a time for clean measurement, while multivariate testing changes multiple variables simultaneously and requires significantly higher traffic volumes to produce reliable results.

Testing concepts and hooks first almost always produces larger performance improvements than tweaking individual creative elements like images or button colors.

Control only one variable per test, set a minimum sample size before you launch, and define your success metric clearly before the test begins.